AI Maturity: AI Readiness & Early Foundations. Why Governance Now Matters

April 15, 2026

From Experimentation to Structure: GDPR, Data Sovereignty and Responsible AI for Small and Medium Sized Enterprises

Most SMEs do not move from not using AI to using it through a deliberate strategy.

They drift there.

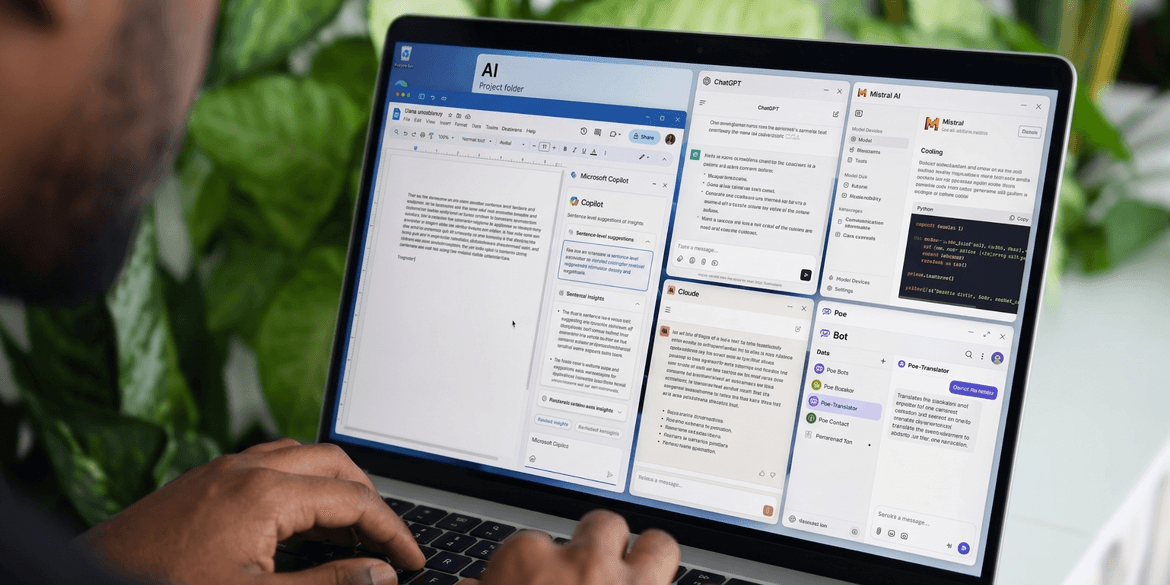

A marketing team experiments with generative AI to produce content. Sales teams begin drafting proposals with AI tools. Operations teams automate elements of reporting.

Over time, AI becomes part of day-to-day activity across the business.

The shift is subtle, but meaningful. What starts as individual experimentation gradually becomes embedded in how work gets done.

The challenge is that this rarely happens within a defined framework. There are no clear policies. No agreed rules around data usage. No governance structure in place.

This is now one of the most common stages of AI maturity for small and medium-sized businesses.

AI is present, but it is unmanaged. Early experimentation is not the issue. In many cases it is necessary.

The risk emerges when usage expands without visibility, without structure and without clarity on how company and client data is being handled.

This is the point where AI moves from curiosity to responsibility. And it is where the serious conversation about AI for organisations begins.

What AI Readiness and Foundations Actually Looks Like

At this stage, AI is already part of the organisation, but it has not yet been formalised.

The patterns are usually consistent.

Teams are using generative AI tools to support everyday tasks. AI capabilities are embedded within CRM, finance, HR or marketing platforms.

Basic workflow automation is being introduced through SaaS tools. Leadership oversight is limited or absent.

For smaller businesses, usage is often informal and driven by productivity. Individuals adopt tools because they help them work faster.

For medium-sized businesses, the picture becomes more complex. AI is often being used across multiple departments, but in ways that are fragmented and uncoordinated.

The common issue is not adoption. It’s visibility.

AI is already influencing how work is done, but the organisation does not yet have a clear understanding of where it is being used, how it is being used or what data is passing through those systems.

This is the point at which governance becomes necessary.

The Real Risk for Businesses: GDPR and Data Sovereignty

As AI tools become part of everyday business activity, regulatory considerations become more relevant.

Under UK GDPR, organisations are required to process personal data lawfully, transparently and securely.

When employees use external AI platforms, this introduces a new set of questions.

Where is the data being processed? Is information stored or retained by the platform? Could client or customer data be exposed to third parties?

Many widely used AI tools operate globally. This means data entered into these systems may be processed or stored outside the UK.

For organisations operating in regulated sectors such as legal, financial services, healthcare or public sector supply chains, this becomes particularly important.

Data sovereignty, which relates to where data is physically stored and which legal jurisdiction governs it, can have direct compliance implications.

The challenge for organisations is that these risks are often not visible during early adoption. Teams may be using powerful tools without fully understanding how data flows through them.

For medium-sized businesses, this quickly becomes a leadership-level concern. As usage increases across departments, so does the potential for regulatory exposure and reputational risk.

Responsible AI adoption therefore requires a shift from informal experimentation to structured governance.

The Shift from Experimentation to Policy

Moving from basic usage to structured adoption does not require a large transformation programme. For most organisations, governance begins with a small number of practical steps.

Define Acceptable Use

Organisations should establish clear guidance on what information can be entered into AI tools and what should not. This typically includes restricting the use of sensitive client data, confidential business information and regulated personal data. Clarity at this stage prevents unintentional exposure later.
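For teams that want to back the written guidance with a lightweight check, a rough sketch might look like the following. It is illustrative only: the category names, the patterns and the `find_policy_flags` function are assumptions made for this example, and simple pattern matching will not catch every kind of sensitive data.

```python
import re

# Hypothetical restricted categories. A real acceptable use policy would
# define its own list, and pattern matching is only a rough first filter.
RESTRICTED_PATTERNS = {
    "email address": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "confidentiality marking": re.compile(r"\bconfidential\b", re.IGNORECASE),
}


def find_policy_flags(text: str) -> list[str]:
    """Return the names of restricted categories that appear in the text."""
    return [
        name
        for name, pattern in RESTRICTED_PATTERNS.items()
        if pattern.search(text)
    ]


if __name__ == "__main__":
    draft = "Email jane@example.com the CONFIDENTIAL pricing report."
    print(find_policy_flags(draft))
```

A check like this supports the policy rather than replacing it. The guidance itself still needs to be written down and understood by the people using the tools.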

Map AI Usage Across the Business

Leadership teams should build a simple picture of where AI is already being used. Which teams are using AI tools? Which platforms include embedded AI capabilities? What types of data are being processed?

This does not need to be complex, but it does need to exist. Visibility is the foundation of control.
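As an illustration of how simple that picture can be, the three questions above could be captured in a small register. The sketch below is one possible shape, not a prescribed format: the `AIUsageRecord` fields, the team names and the tool names are all placeholders invented for this example.

```python
from dataclasses import dataclass


@dataclass
class AIUsageRecord:
    team: str                   # which team is using the tool
    tool: str                   # placeholder tool name, not a real product
    embedded_in_platform: bool  # True if the AI is a feature of an existing platform
    data_types: list[str]       # what kinds of data pass through it


# Hypothetical entries for illustration only.
REGISTER = [
    AIUsageRecord("Marketing", "Generative writing tool", False, ["public content"]),
    AIUsageRecord("Sales", "CRM assistant", True, ["personal data", "client data"]),
    AIUsageRecord("Operations", "Reporting automation", True, ["personal data"]),
]


def records_touching(register: list[AIUsageRecord], data_type: str) -> list[AIUsageRecord]:
    """Return every register entry that processes the given type of data."""
    return [record for record in register if data_type in record.data_types]


if __name__ == "__main__":
    for record in records_touching(REGISTER, "personal data"):
        print(f"{record.team}: {record.tool}")
```

A shared spreadsheet with the same columns would serve the same purpose. What matters is that the record exists and is kept up to date.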

Review Vendor Relationships

Where AI tools interact with company data, it is important to understand how those vendors operate. This includes reviewing data processing agreements, understanding whether data is used for model training and identifying where data is stored.

This step is often overlooked, but it becomes critical as usage scales.
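The three review points above can be tracked as a short checklist per vendor. The following is a minimal sketch under stated assumptions: the `VendorReview` structure, its field names and the vendor name are hypothetical, and a real review would usually cover more than these three questions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VendorReview:
    vendor: str                        # placeholder vendor name
    dpa_reviewed: bool                 # has the data processing agreement been reviewed?
    used_for_training: Optional[bool]  # None means not yet confirmed with the vendor
    storage_location: Optional[str]    # None means not yet identified


def open_questions(review: VendorReview) -> list[str]:
    """List the review points that are still unanswered for a vendor."""
    questions = []
    if not review.dpa_reviewed:
        questions.append("Review the data processing agreement")
    if review.used_for_training is None:
        questions.append("Confirm whether data is used for model training")
    if review.storage_location is None:
        questions.append("Identify where data is stored")
    return questions


if __name__ == "__main__":
    review = VendorReview("Example Vendor", dpa_reviewed=True,
                          used_for_training=None, storage_location=None)
    for question in open_questions(review):
        print(question)
```

An empty list does not mean the vendor is acceptable. It only means the basic questions have been answered and can now be assessed.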

Assign Accountability

AI usage should not sit informally within a single department. Responsibility needs to be defined at a leadership level. This ensures that AI adoption aligns with broader organisational priorities, risk management practices and compliance obligations.

Governance at this stage is not about restricting innovation. It is about enabling it safely.

Breaking Down the Governance Fear

For many business leaders, the concept of AI governance immediately suggests complexity.

It can feel like something designed for large enterprises with dedicated compliance teams.

In reality, governance at this stage can be straightforward. It may involve a short internal policy outlining acceptable AI use, a simple approval process before new tools are adopted and periodic reviews of which platforms are being used across the business.

These steps introduce structure without slowing progress. They replace uncertainty with clarity.

For organisations beginning to scale their use of AI, this often becomes the difference between confident adoption and unmanaged risk.

Small vs Medium-Sized Businesses at the AI Readiness and Foundations Stage

The risks associated with this stage vary depending on organisational size.

For smaller businesses, the challenge is typically informal experimentation. Individuals adopt tools independently without considering how company or client data may be exposed.

For medium-sized businesses, the issue is scale. Multiple departments may be using AI tools simultaneously, creating fragmented usage patterns and increasing compliance exposure.

As organisations grow, governance becomes more important. What begins as a productivity tool can quickly become embedded in core operations.

Governance as a Competitive Advantage

Over the next few years, the conversation around AI will shift. It will move from capability to responsibility.

Clients, partners and regulators will begin asking more detailed questions.

How is AI being used within the organisation? How is sensitive data protected? Where is information stored and processed?

Organisations that can answer these questions clearly will build trust more quickly.

Governance should not be seen as a barrier to innovation. In many cases, it becomes a differentiator.

Organisations that combine practical AI usage with clear policies and responsible data practices are better positioned to scale safely.

The question is no longer whether SMEs are using AI. It is whether they can demonstrate that they are using it responsibly.

In the next article, we move to the next stage of the maturity journey.